Develop your website right – 2

February 3, 2018 • Glenn Murray

We’ve been through what you should do if you want to develop a website right. Now here’s a checklist of what you should do only sparingly or not at all. Hope it makes it all easier to grapple with.

DO SPARINGLY OR AVOID

DON’T

- Use text within images

- Rely too heavily on footer links for navigation

- Use empty hyperlinks with deferred hyperlink behavior

- Use Silverlight

- Use Frames or iFrames

- Use spamming techniques

AVOID DUPLICATE CONTENT

You have duplicate content when:

- you have more than one version of any page

- you reference any page with more than one URL

- someone plagiarizes your content

- you syndicate content

And it’s a problem for two reasons:

- Duplicate content filter – Let’s say there are two pages of identical content out there on the Web. Google doesn’t want to list both in the SERPs, because it’s after variety for searchers. The duplicate content filter identifies the pages, then Google applies intelligence to decide which is the original. It then lists only that one in the SERPs. The other one misses out. Problem is, Google may choose the wrong version to display in the SERPs. (There’s no such thing as a duplicate content penalty.)

- PageRank dilution – Some webmasters will link to one page/URL and some will link to another, so your PageRank is spread across multiple pages, instead of being focused on one. Note, however, that Google claims that they handle this pretty well, by consolidating the PageRank of all the links.

Below are some examples of duplicate content and how to resolve them.

MULTIPLE VERSIONS OF THE SAME PAGE

Multiple versions of the same page are clearly duplicate content (e.g. a print-friendly version and the regular display version). The risk is that Google may choose the wrong one to display in the SERPs.

Solutions:

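Use a nofollow link to the print-friendly version. This tells Google’s bots not to follow that link, which makes it much less likely that the print-friendly version will be crawled and indexed. (It’s not a guarantee, though: bots can still reach the page through any other link that points to it.) The HTML of a nofollow link looks like this: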

<a href="page1.htm" rel="nofollow">go to page 1</a>

Or use your robots.txt file to tell the search bots not to crawl the print-friendly version.
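As a rough sketch of the robots.txt approach, the Python snippet below parses a tiny robots.txt with the standard library’s urllib.robotparser and checks which URLs a well-behaved bot would be allowed to crawl. The /print/ folder and the yourdomain.com URLs are assumptions for illustration only; substitute wherever your print-friendly pages actually live.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: assumes all print-friendly pages live under /print/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /print/
"""


def can_crawl(url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the rules in ROBOTS_TXT allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


# The regular page stays crawlable; the print-friendly copy does not.
regular_ok = can_crawl("http://www.yourdomain.com/article.htm")
print_ok = can_crawl("http://www.yourdomain.com/print/article.htm")
```

Checking your rules this way before you upload the file is a cheap way to make sure you haven’t accidentally blocked the pages you do want crawled.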

MULTIPLE URLS FOR A SINGLE PAGE

Even though there’s really only one page, the search engines interpret each discrete URL as a different page. There are two common reasons for this problem:

- No canonical URL specified

- Referral tracking & visitor tracking

NO CANONICAL URL SPECIFIED

A canonical URL is the master URL of your home page. The one that displays whenever your home page displays. For most sites, it would be http://www.yourdomain.com.

Test if your site has a canonical URL specified. Open your browser and visit each of the following URLs (substituting your domain name, of course).

- http://www.yourdomain.com/

- http://yourdomain.com/

- http://www.yourdomain.com/index.html (or index.htm)

- http://yourdomain.com/index.html (or index.htm)

If your home page displays, but the URL stays exactly as you typed it, you have not specified a canonical URL, and you have duplicate content.

Solutions:

- Choose one of the above as your canonical URL. It doesn’t really matter which one. Then redirect the others to it with 301 redirects. (Your web developer should know how to set up a 301 redirect, but just in case, here’s a 301 Redirect How to…)

- Specify your preferred domain in Google Webmaster Tools (you have to register first). To do this, at the Dashboard, click your site, then click Settings and choose an option under Preferred domain. This is the equivalent of a 301 redirect for Google. But it has no impact on the other search engines, so you should still set up proper 301 redirects.

- Create and submit an open format (Google) sitemap and ensure that it uses only the appropriate (‘canonical’) URLs. (See ‘Create an open format / Google sitemap’ for more info.)
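To make the redirect rule concrete, here is a minimal Python sketch of the decision your 301 redirects need to implement: every variant of the home page URL maps to the one canonical URL. It assumes http://www.yourdomain.com/ was chosen as canonical (any of the four would do), and the function name is made up for illustration. It is not server configuration; your developer would express the same logic in your web server’s redirect rules.

```python
from typing import Optional
from urllib.parse import urlsplit

# Assumption: the www version with a trailing slash is the chosen canonical URL.
CANONICAL_HOME = "http://www.yourdomain.com/"
HOME_HOSTS = {"yourdomain.com", "www.yourdomain.com"}
HOME_PATHS = {"", "/", "/index.html", "/index.htm"}


def redirect_target(url: str) -> Optional[str]:
    """Return the URL to 301-redirect to, or None if no redirect is needed."""
    parts = urlsplit(url)
    is_home_variant = parts.hostname in HOME_HOSTS and parts.path in HOME_PATHS
    if is_home_variant and url != CANONICAL_HOME:
        return CANONICAL_HOME
    return None
```

With this rule in place, all four test URLs end up at the same address, which is exactly what you should see in your browser’s address bar once the redirects are set up.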

REFERRAL TRACKING & VISITOR TRACKING

If you’re storing referrer and/or user data in your URLs, your URLs will vary depending on who the visitor is and/or what link they clicked to arrive at your page. This may be the case if you manage a forum (e.g. a phpBB 2.x forum) or you participate in an affiliate program.

In addition to the duplicate content filter and PageRank dilution problems, this sort of duplicate content makes your site a ‘bot-trap’, significantly increasing the time it takes search engine bots to crawl your site. In Google’s words:

“Duplicated content can lead to inefficient crawling: when Googlebot discovers ten URLs on your site, it has to crawl each of those URLs before it knows whether they contain the same content (and thus before we can group them as described above). The more time and resources that Googlebot spends crawling duplicate content across multiple URLs, the less time it has to get to the rest of your content.” (From the Google Webmaster Central Blog)

Solutions:

- If you host a forum on your site, find out if upgrading to the most recent version will resolve the problem. (e.g. phpBB 3.0 handles dynamic URLs in a search-friendly way.)

- Devise an appropriate strategy for referrer/visitor tracking. This is well beyond the scope of this book (and my expertise). Please see Nathan Buggia’s URL Referrer Tracking for more information.
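I won’t attempt a full tracking strategy here, but the following Python sketch at least shows why tracked URLs count as duplicates: strip the tracking data and they all collapse to the same address. The parameter names (ref, sid, affiliate_id) are hypothetical; use whatever your forum software or affiliate program actually appends.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical tracking parameters; replace with the ones your site really uses.
TRACKING_PARAMS = {"ref", "sid", "affiliate_id"}


def strip_tracking(url: str) -> str:
    """Return url with the tracking parameters removed from its query string."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_PARAMS
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept), parts.fragment)
    )
```

Two visitors with different session IDs produce two different URLs, yet strip_tracking returns the same result for both. To a search engine bot, though, they look like two separate pages.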

WORDPRESS

WordPress causes a lot of duplicate content issues by naturally pointing to the same content with multiple different URLs. (e.g. A single post can be accessed through the blog’s home page, search results, date archives, author archives, category archives, etc. And each of these access points has a different URL.)

Solution: For advice on overcoming duplicate content issues on WordPress blogs, see ‘Avoid duplicate content issues in your blog’.

SOMEONE HAS PLAGIARIZED YOUR CONTENT

If someone has plagiarized your content, Google may mistakenly identify their plagiarized version as the original. This is unlikely, however, because most webmasters who plagiarize content are unlikely to have a very credible, authoritative site.

Solution: You can contact the offender and ask that they remove the content, and you can also report the plagiarism to Google (http://www.google.com/dmca.html). You can also proactively monitor who’s plagiarizing your content using Copyscape.

YOU SYNDICATE CONTENT

If you publish content on your site and also syndicate it, your site’s version may not appear in the SERPs. If one of the sites that has reprinted your article has more domain authority than yours, their syndicated version may appear in the SERPs instead of yours. Also, other webmasters may link to the syndicated version instead of yours.

Solution: One way to try to avoid this situation is to always publish the article on your site a day or two before you syndicate it. Another is to always link back to the original from the syndicated version. Whatever the case, the backlink from the syndicated article still contributes to your ranking. You just may not get as much direct search-driven traffic to the article (which really isn’t the point of content syndication, anyway).

IF YOU USE WORDPRESS

WordPress is a free content management system. You can use it to create an entire website or just a blog, and have it appear at a URL of your choice. You can manage its appearance, write and publish blog posts, categorize and tag those posts, receive, moderate and publish comments, manage members, and set up RSS feeds so that other people can subscribe to your blog. It comes with virtually everything you need, inbuilt, and if there’s a feature that’s not inbuilt, it’s pretty likely you’ll be able to find a free plugin that does it.

WordPress isn’t the only CMS + blogging tool out there, but it’s definitely the most popular.

‘Out of the box,’ WordPress is naturally fairly search-friendly. Mostly because it makes writing and publishing lots of content really easy, and content’s half the SEO battle. But it’s far from perfect. There’s actually quite a bit to do in order to get it really search-friendly.

For more information on optimizing your blog posts, see ‘Optimize your web content’.

HOST YOUR OWN

Although you can get WordPress to host your blog on their servers, you should definitely host your own. Then you can just tack it onto your domain, ensuring you get full benefit of all backlinks. (Mine, for instance, is www.divinewrite.com.au/blog.) You can’t do that with a hosted version. Hosting your own is a bit more work, but it’s worth it.

ENSURE YOU HAVE FTP ACCESS TO YOUR WEB SERVER

In order to install the latest version of WordPress and the plugins referenced below, you’ll need FTP access to your web server. That means you’ll need an FTP client (I recommend FileZilla – it’s excellent and it’s free), and you’ll need to know your FTP host and login details. Your web host will be able to supply these.

INSTALL THE LATEST VERSION OF WORDPRESS

If you haven’t done so already, install the latest version of WordPress. Some of the plugins below may not work with earlier versions. (All my instructions below relate to Version 5.2.1 – the latest at the time of writing.) If you’re using an earlier version of WordPress, you’ll find upgrade instructions here. (Follow these instructions very carefully. They’re not overly difficult, but they’re detailed and time-consuming, and a mistake can be costly.)

INSTALL & ACTIVATE ALL OF THESE WORDPRESS PLUGINS

Once WordPress is installed and you’ve tested that it’s working OK, you’ll need to install and activate a number of plugins. I’m not going to discuss the plugins here. Rather, in the sections following, wherever a plugin comes into play, I’ll explain how and why. For now, you’ll just have to trust me!

Note that each plugin has installation instructions either on the download page or in the downloaded readme.txt file. They’re all simple to install.

- Akismet

- Yoast WordPress SEO

- Redirection

- SEO Slugs

- Subscribe To Comments

- AddThis

- Yet Another Related Posts Plugin (YARPP)

If you have trouble installing or using any of these plugins, it may be that either your version of WordPress or your theme is incompatible. If you’re confident that the problem lies elsewhere, you should contact the developer of the plugin directly.

LINK TO RELATED CONTENT

When linking within your blog content, you can follow the same guidelines as you would for links within the rest of your site’s content. See ‘Optimize your internal link architecture’.

OPTIMIZE YOUR CATEGORIES FOR YOUR MAIN KEYWORDS

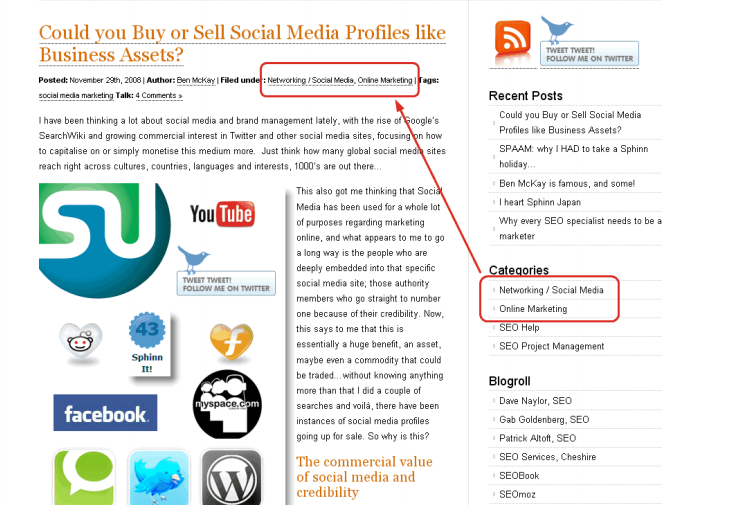

WordPress allows you to create categories to group related posts, to make it easy for visitors to find the information they’re after. By default, these categories are listed as links in the right sidebar on every page of your blog. What’s more, when you assign a post to a category, that category link is listed with the post as well.

Your chosen categories are listed with the post

For best SEO results, you should create a category for each of your main keywords, because by the time you’ve completed all the steps in this section, your categories will actually be used as keywords (in the Keywords meta tag).

This arrangement gives you a series of target keywords on every page. (And they actually help your visitors!)

What’s more, this approach themes your blog, just as you’re theming your site (see here for more information on site theming).

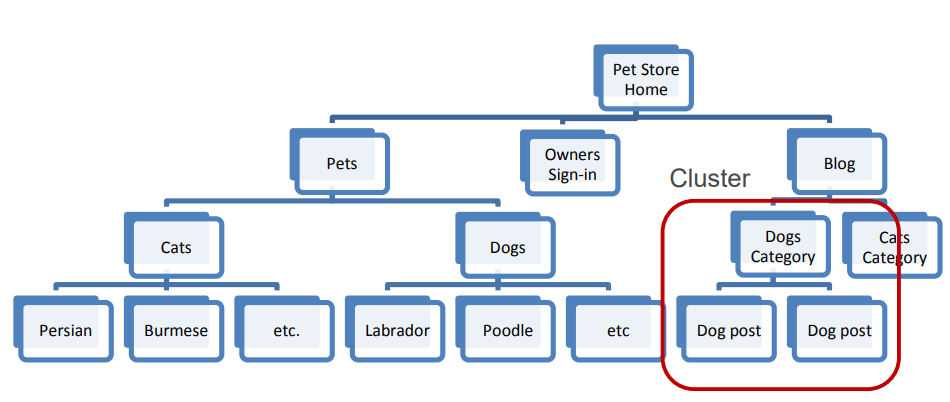

Clustering your blog posts around keywords (theming)

Oh, and you may have read elsewhere that you should limit yourself to only one category per post, but that’s only a problem if you’re not dealing properly with duplicate content. And you will be. (See ‘Avoid duplicate content issues in your blog’.)

OPTIMIZE YOUR BLOG TAGS FOR MINOR KEYWORDS

Tags are another way to group posts. They’re handy for incidental groupings that won’t add any visitor value in the right sidebar. E.g. Today on my blog, I wrote about the illogical notion of outsourcing your blog writing to cheap ghostwriters. I mentioned Twitter and Social Media in the post, but chose not to create a category for each, because those subjects aren’t core to my blog, and they’re not important keywords. Instead, I tagged the post with “twitter” and “social media.”

For best SEO, you need to make these tags display with your post, along with your Category links. This isn’t the default WordPress behavior; you need to configure it in your theme. I’m no programmer, but the following line of code in index.php did the trick for me. (I put it next to the ‘Posted in’ code.)

<?php the_tags('Tags: ', ', ', '<br />'); ?>

OPTIMIZE YOUR URLS

Although there’s no real consensus on whether it’s particularly beneficial to have your keywords in your URLs, it certainly can’t hurt.

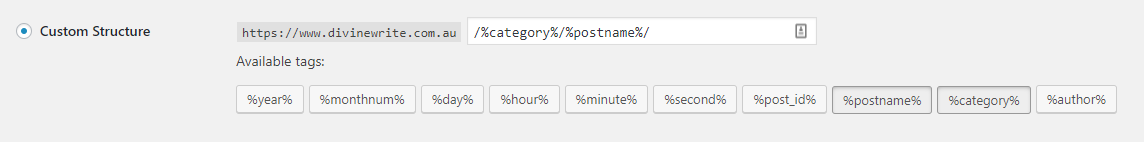

SWITCHING TO PRETTY PERMALINKS

WordPress allows you to use ‘Pretty Permalinks,’ so that instead of your URLs looking something like this:

www.yourdomain.com/blog/2008/11/09/10-reasons-cats-make-good-pets/

They’ll look something like this:

www.yourdomain.com/blog/cats/10-reasons-cats-make-good-pets/

or this:

www.yourdomain.com/blog/10-reasons-cats-make-good-pets/

IMPORTANT: When you switch to Pretty Permalinks, all your existing post URLs will change. But Google will still have all the existing URLs indexed. Also, all existing backlinks will still point to the old URLs (only new links will point to the new URLs). This will dilute the PageRank of the post. So you have to redirect the old URLs to the new ones. Be aware that this can take a lot of time.

To switch to Pretty Permalinks:

- Check the requirements for Pretty Permalinks

- In WordPress, select Settings > Permalinks

- Paste one of the following into the Custom Structure field:

/%category%/%postname%/

(e.g. /cats/10-reasons-cats-make-good-pets/. WordPress chooses the category based on the first category, alphabetically, that you selected when you posted.)

– OR –

/%postname%/

(e.g. /10-reasons-cats-make-good-pets/)

Switch to Pretty Permalinks

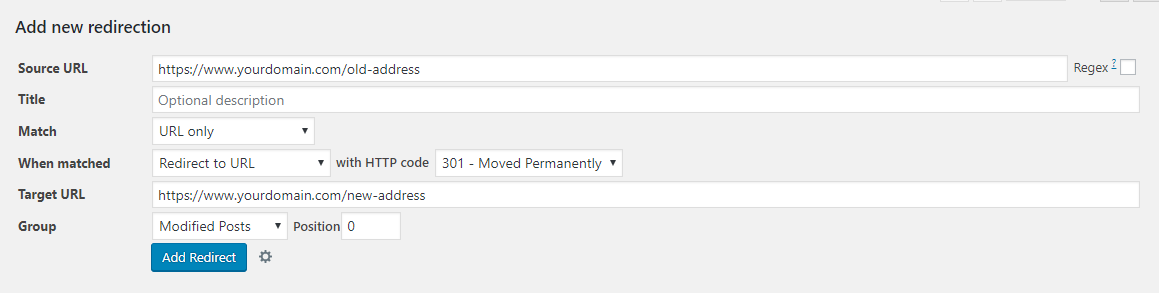
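If the percent-sign tags in the Custom Structure field look cryptic, this small Python sketch shows the idea: each tag is a placeholder that gets swapped for a value from the post. It’s only an illustration of the substitution, not how WordPress itself implements it, and the function name and post fields are made up for the example.

```python
def expand_permalink(structure: str, post: dict) -> str:
    """Replace each %tag% in structure with the matching value from post."""
    url = structure
    for tag, value in post.items():
        url = url.replace(f"%{tag}%", value)
    return url


# Hypothetical post, matching the cat example used in this section.
post = {"category": "cats", "postname": "10-reasons-cats-make-good-pets"}

with_category = expand_permalink("/%category%/%postname%/", post)
without_category = expand_permalink("/%postname%/", post)
```

Here with_category comes out as /cats/10-reasons-cats-make-good-pets/ and without_category as /10-reasons-cats-make-good-pets/, the same two shapes shown in the examples above.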

REDIRECTING YOUR OLD URLS

Because Google will still have all the existing URLs indexed, and all existing backlinks will still point to the old URLs, you have to redirect the old URLs to the new ones.

To redirect URLs:

- In WordPress, select Tools > Redirection

- Ensure the Redirections Group is selected

- In the ‘Add new redirection’ section, enter the old URL in the Source URL field

- Enter the new URL in the Target URL field

- Click Add Redirection

- Test that the redirection worked, by pasting the old URL into your browser’s address bar. If the redirection worked, the URL should change to the new one when the page loads

Redirect date-based URLs to Pretty Permalinks, using Redirection plugin
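If you have a lot of posts, working out each old/new pair by hand gets tedious. The Python sketch below shows one way to derive the new URL from the old one, so you can generate the Source URL and Target URL pairs in bulk before entering them. It assumes your blog lives under /blog/, your old URLs were date-based (year/month/day), and you switched to the /%postname%/ structure; adjust the pattern if your setup differs.

```python
import re
from typing import Optional

# Matches old date-based paths such as /blog/2008/11/09/post-name/
DATE_PERMALINK = re.compile(r"^/blog/\d{4}/\d{2}/\d{2}/(?P<slug>[^/]+)/?$")


def new_permalink(old_path: str) -> Optional[str]:
    """Return the post-name permalink for a date-based path, else None."""
    match = DATE_PERMALINK.match(old_path)
    if match is None:
        return None  # Not a date-based post URL, so there is nothing to redirect.
    return f"/blog/{match.group('slug')}/"


old = "/blog/2008/11/09/10-reasons-cats-make-good-pets/"
target = new_permalink(old)
```

You would still test each redirect in your browser afterwards, as described in the last step above.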

ELIMINATING COMMON FUNCTION WORDS

Switching to Pretty Permalinks also introduces another minor issue. Now that you’re using your blog post title as part of the URL, your URLs will end up with heaps of words like “the”, “and”, “if”, “but”, and so on. Fortunately, there’s a plugin built specifically to resolve this problem: SEO Slugs. All you have to do is install and activate it, and from then on, new post URLs will include only the meaningful words (many of which will be keywords). Note that it won’t change any of your existing URLs – only URLs of future posts.
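To give you a feel for what’s going on, here’s a minimal Python sketch of the general technique: lowercase the title, drop the function words, and join what’s left with hyphens. The word list is a short one I made up for illustration, not the plugin’s actual list, and the code is not how SEO Slugs itself is written.

```python
import re

# Illustrative stop-word list only; not the list the SEO Slugs plugin uses.
STOP_WORDS = {"a", "an", "and", "but", "if", "of", "or", "the", "to"}


def make_slug(title: str) -> str:
    """Build a URL slug from a post title, leaving out common function words."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    kept = [word for word in words if word not in STOP_WORDS]
    return "-".join(kept)


slug = make_slug("The Cat and the Hat")
```

In this example, slug ends up as cat-hat rather than the-cat-and-the-hat.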

AVOID DUPLICATE CONTENT ISSUES IN YOUR BLOG

If a single post is accessible through more than one URL, you have duplicate content. The search engines see multiple URLs and assume multiple pages. This is a potential problem for two reasons: 1) the search engines have to guess at which one to display in the SERPs; and 2) when people link to your post, they may use different URLs, thus diluting the rightful PageRank of the post. In theory, Google does a pretty good job of reconciling duplicate content. But it’s not perfect. And the other search engines are likely worse.

Let’s say you publish a new post, and you assign it to three categories, and tag it with four tags. By default, this would mean that that single post can now be accessed via 11 different URLs! (i.e. Your blog’s Home URL, the main post URL, 3 x category URLs, 4 x tag URLs, the search results URL and your author archive URL).
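If you want to check that arithmetic, the Python sketch below lists the access points for a single post. The /category/, /tag/ and /author/ paths and the ?s= search parameter follow WordPress’s usual default URL patterns, but treat them, along with the sample names, as assumptions; your theme and permalink settings may produce different URLs.

```python
def access_urls(blog, post_slug, categories, tags, author, search_term):
    """List the URLs through which one post can be reached."""
    urls = [f"{blog}/", f"{blog}/{post_slug}/"]             # home + main post
    urls += [f"{blog}/category/{c}/" for c in categories]   # category archives
    urls += [f"{blog}/tag/{t}/" for t in tags]              # tag archives
    urls.append(f"{blog}/?s={search_term}")                 # search results
    urls.append(f"{blog}/author/{author}/")                 # author archive
    return urls


urls = access_urls(
    "http://www.yourdomain.com/blog",
    "10-reasons-cats-make-good-pets",
    categories=["cats", "pets", "animals"],
    tags=["kittens", "adoption", "vets", "toys"],
    author="glenn",
    search_term="cats",
)
print(len(urls))  # 11
```

Add a date archive or a second search term and the count climbs even higher.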

In the face of so many choices, the search engines may have trouble deciding which one to display in the SERPs, and links from other websites are likely to be split a number of ways. Obviously, you don’t want this. You want the main post URL to rank, and to accumulate PageRank from backlinks. Not the version that displays when you click a Tag, Category, Archive, Author or search result link. (The main post URL is the one that’s invoked when you click on the post title from your blog’s home page. The other versions are known as ‘Archive’ pages.)

To overcome these issues, you need to:

- tell the search engines not to index the Archive pages;

- remove the Archive links from the right sidebar in your theme;

- disable the Archive pages; and

- provide a summary only on your blog’s home page and Archive pages.

DISABLE ARCHIVE PAGES & TELL THE SEARCH ENGINES NOT TO INDEX THEM

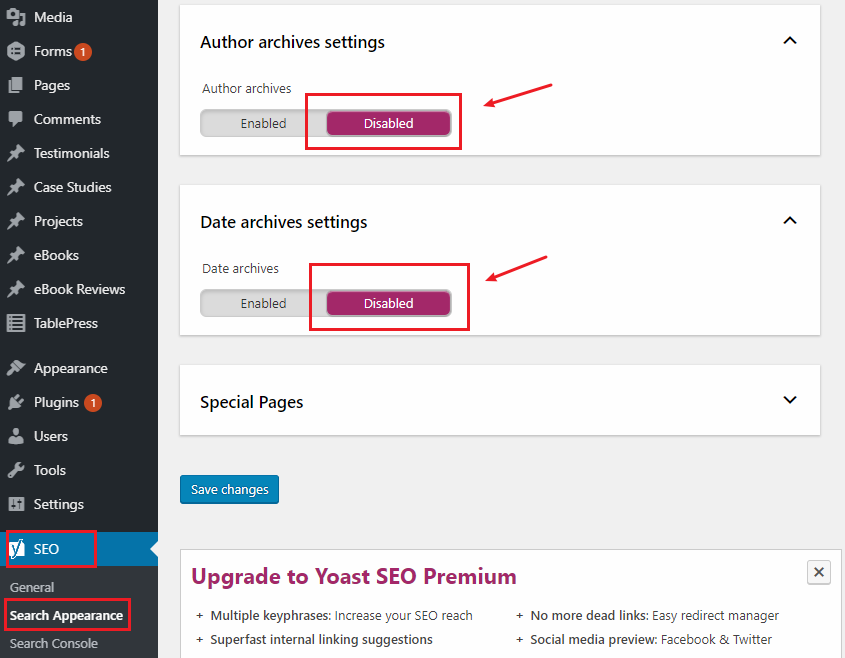

To disable author and date archives, and tell the search engines not to index them:

- In WordPress, go to SEO > Search Appearance > Archives

- Fill in the check boxes as follows. (Don’t change any of the other check boxes for now.)

Stop Google from indexing the Archive pages so only the main post page appears in the SERPs – Yoast WordPress SEO plugin

PROVIDE A SUMMARY ONLY ON YOUR BLOG’S HOME PAGE AND CATEGORY PAGES

For best SEO, you want everyone to be linking to the same version of each post, so that it accumulates as much PageRank as possible. If some people link to your home page, others link to your category page and yet others to the main post, your PageRank for that post may be greatly diluted.

To help ensure this doesn’t happen, you need to display just a summary on all but the main post. People will naturally link to the full version of your post (the main post), not the summary versions.

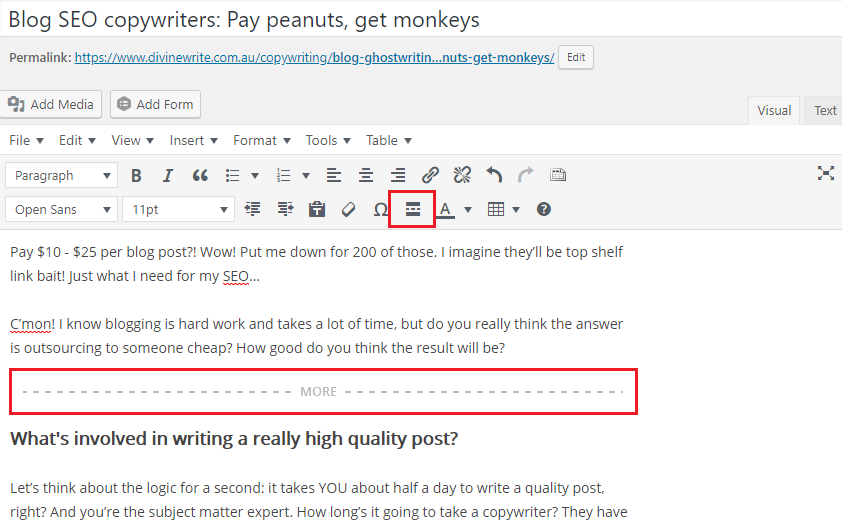

To display a summary only on all but the main post:

Note that your WordPress theme may do this automatically, in which case, you can ignore the following instruction.

- While you’re writing your post, position the cursor at the point where you want the summary to end

- Click the Insert Read More tag button. A “More” break will then be inserted.

Display a summary only on all but the main post

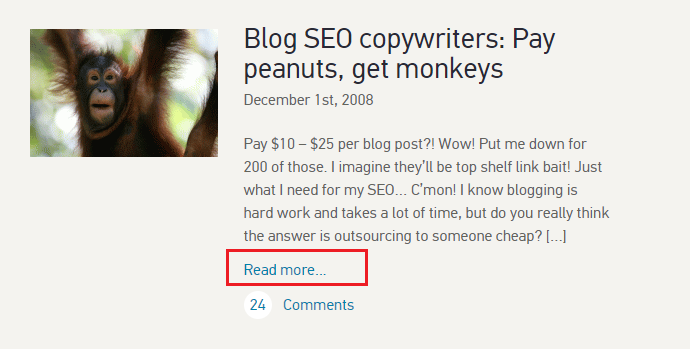

Your post will then appear as follows on your blog’s home page and on your category pages.

Summary post on the blog home page

For more information on formatting the read more… link, see Customizing the Read More.

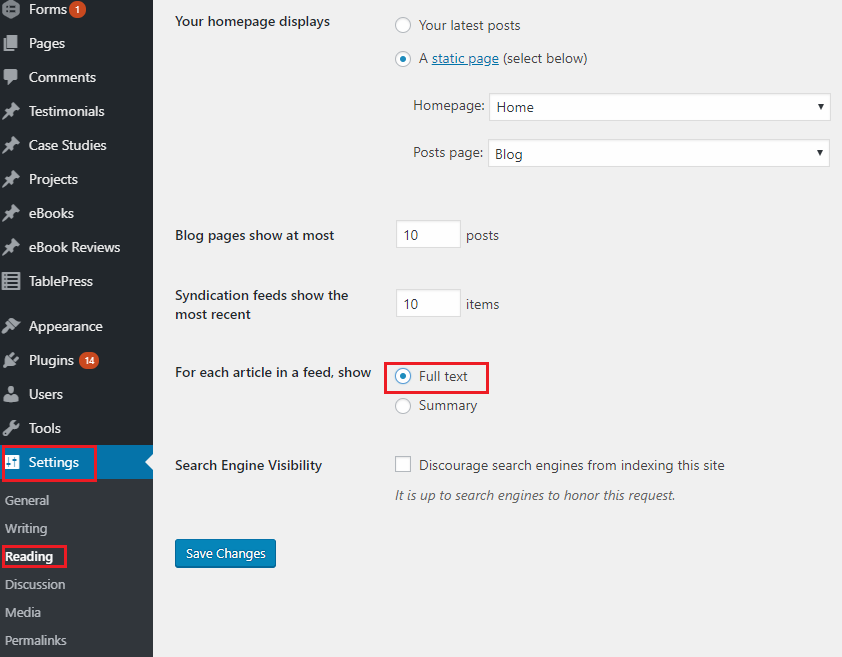

PUBLISH THE FULL TEXT OF EACH POST TO YOUR FEED

Finally, make sure you’re publishing the full text of each post to your feed, not just a summary. This reduces your subscribers’ workload, so they’re more likely to remain subscribers.

To publish the full text of each post to your feed:

- In WordPress, go to Settings > Reading

- Make sure ‘For each article, show: Full text’ is selected

Publish the full text of every post to your RSS feed

MAKE COMMENTING EASY

You want as many people to comment on your blog posts as possible. It’s great for your SEO (because it indicates that your posts are interesting, helpful and/or topical, and the search engines take this into consideration), and it’s excellent for engagement with your social media community. So you want to make it as easy as possible.

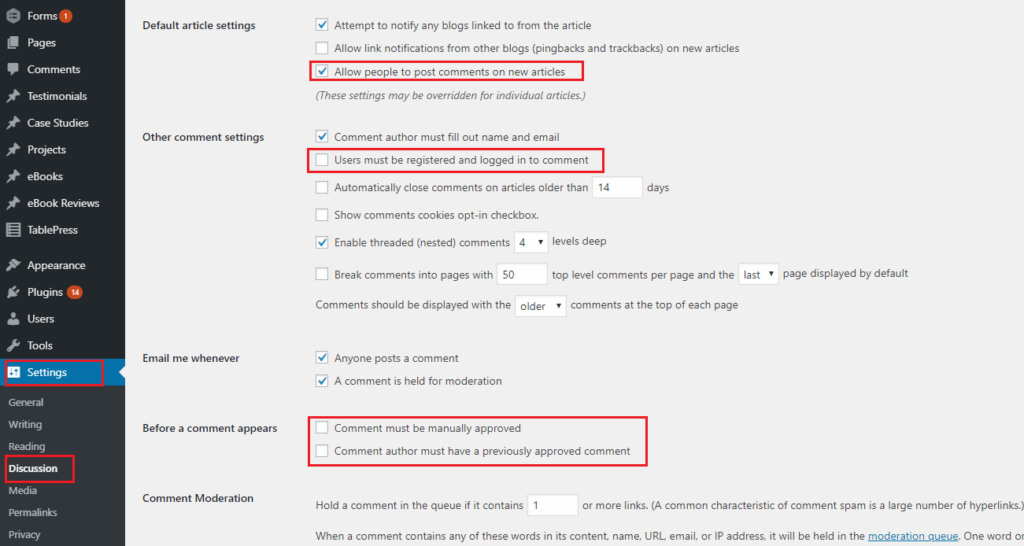

To turn on commenting & allow people to comment without registration or moderation:

- In WordPress, go to Settings > Discussion

- Check ‘Allow people to post comments on the article’

- Uncheck ‘An administrator must approve the comment (regardless of any matches below)’ and ‘Comment author must have a previously approved comment’. This will ensure that all comments are published without moderation (unless they contain links, as discussed below).

- Uncheck ‘Users must be registered and logged in to comment’ so that anyone can comment.

Make commenting easy

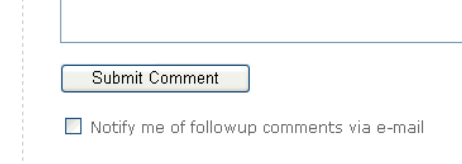

- If you haven’t done so already, install the Subscribe To Comments plugin. This plugin automatically inserts a ‘Notify me of follow-up comments via e-mail’ check box, so that people can elect to receive an email when someone posts a comment after they’ve posted theirs.

With the Subscribe to Comments plugin installed, visitors can elect to be notified of new comments

You should also include a prominent ‘Leave a comment’ link at the end of each post. People know they can leave comments, but it never hurts to prompt them! And make sure you always answer comments and optimize your answers for your keywords.

CONTROL COMMENT SPAM

Years ago, search spammers realized they could generate backlinks by commenting on other people’s blogs and including a link with their comment. To counter this, WordPress makes all links in comments ‘nofollow’, by default, so they pass on no PageRank. However, many spammers still try comment spam, so you need to do a couple of things to control it:

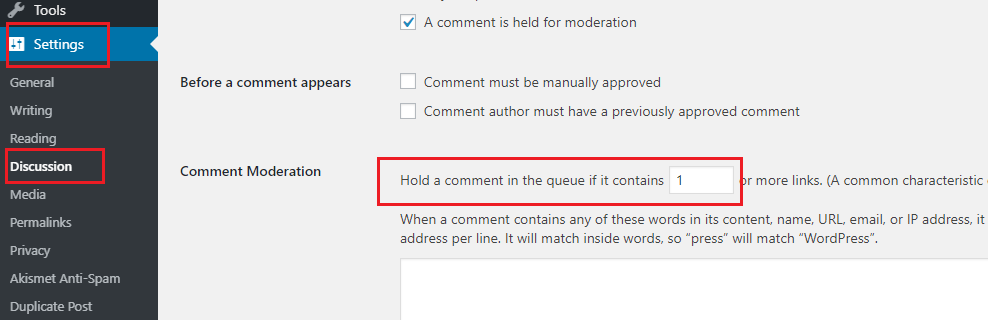

To control comment spam:

- Install Akismet. It will catch virtually all spam comment attempts, and store them for you to moderate at your leisure. I’ve been using it for years, and in all that time, it’s only ever missed a couple of spam attempts, and only incorrectly caught a couple of legitimate comments.

- In WordPress, go to Settings > Discussion

- Set ‘Comment Moderation’ to 1 link (i.e. if anyone posts a comment with a link, their comment will require moderation). This will catch anything that Akismet misses.

Control comment spam

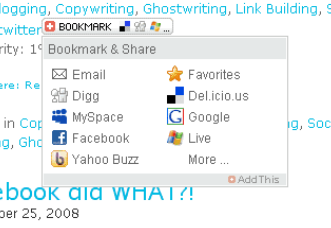
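The ‘Comment Moderation’ rule is simple enough to sketch in a few lines of Python: count the links in a comment and hold it if the count reaches your threshold. This is only an approximation of the idea (it counts anything starting with http:// or https://), not WordPress’s own code, and the function name is made up.

```python
import re

# Rough link detector: anything that starts with http:// or https://
LINK_PATTERN = re.compile(r"https?://", re.IGNORECASE)


def needs_moderation(comment: str, max_links: int = 1) -> bool:
    """Hold the comment for moderation if it contains max_links or more links."""
    return len(LINK_PATTERN.findall(comment)) >= max_links


plain = needs_moderation("Great post, thanks!")
with_link = needs_moderation("Nice. See http://example.com for more.")
```

With the threshold at 1, the plain comment is published straight away, while the one with a link waits for you to approve it.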

ADD A SOCIAL SHARING WIDGET PLUGIN

A social sharing widget makes it easy for readers to share your posts. This is very important, as many of your readers will be other bloggers, and most of them use social media.

Most WordPress themes include sharing buttons by default, but if yours doesn’t, you can add one manually.

Before I had a theme with sharing buttons, I used the AddThis plugin for my social bookmarking widget. Once installed, it automatically displays a button with each post that allows readers to choose their favorite social sharing service and quickly bookmark your post.

AddThis social sharing widget allows readers to choose their favorite service

OPTIMIZE YOUR POST TITLES (THE ON-PAGE HEADING)

Most WordPress themes handle this automatically, but always check to make sure your post titles are tagged <h1> in your code. You can check this by right-clicking on your page and selecting View Source. Your post title should look something like this:

<h1>Paperclips: How to make a fortune from them</h1>
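If you’d rather not scroll through View Source by eye, here’s a minimal Python sketch that pulls the h1 text out of a page’s HTML using the standard library’s html.parser. The class and function names are my own, and the sample HTML is just the example above wrapped in a bare-bones page; you would paste in your own page source.

```python
from html.parser import HTMLParser


class H1Collector(HTMLParser):
    """Collect the text inside every <h1> element."""

    def __init__(self):
        super().__init__()
        self.in_h1 = False
        self.headings = []

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self.in_h1 = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag == "h1":
            self.in_h1 = False

    def handle_data(self, data):
        if self.in_h1:
            self.headings[-1] += data


def find_h1s(html: str) -> list:
    """Return the text of each <h1> found in html."""
    collector = H1Collector()
    collector.feed(html)
    collector.close()
    return [heading.strip() for heading in collector.headings]


page = "<html><body><h1>Paperclips: How to make a fortune from them</h1><p>...</p></body></html>"
titles = find_h1s(page)
```

An empty list means your theme is using some other tag for the post title, and it’s worth asking your developer to change it.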

Also try to include at least one target keyword in your post title. (But don’t do this if it will significantly undermine the effectiveness of the headline. Remember, your headline has to draw the reader into your post. If it doesn’t do that, all your optimization efforts are wasted.)

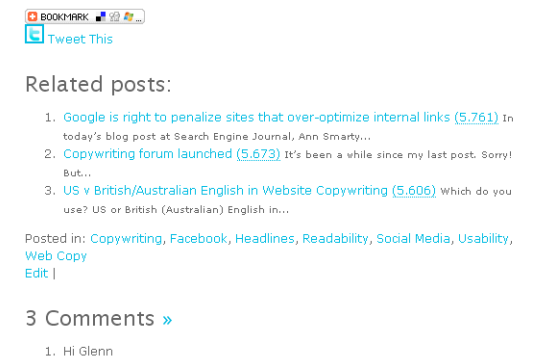

LINK TO RELATED POSTS

By linking to related posts, you’re simultaneously helping visitors and optimizing your page. Visitors will like you because the related posts are useful. And search engines will like you because you’re linking to something relevant, probably with keyword rich anchor text.

The easiest way I’ve seen to incorporate related posts is to use the Yet Another Related Posts Plugin (YARPP). (This is also the plugin recommended by Matt Cutts, the ‘Google Insider’.) Your list of related posts will then look something like the following.

Include links to related posts, with the YARPP plugin.

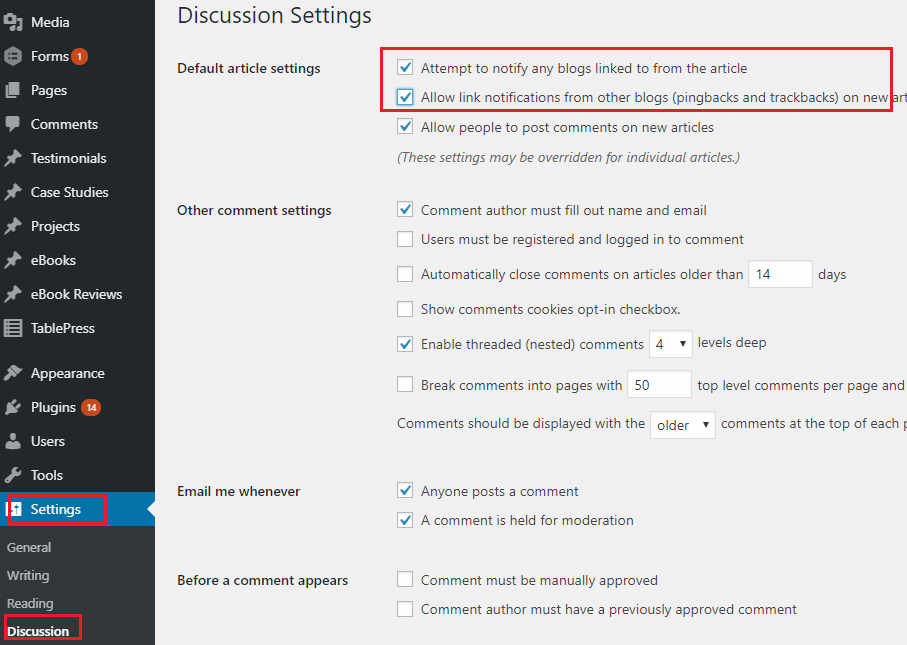

SUPPORT PINGBACKS & TRACKBACKS

A pingback is a notification that another blogger has linked to your post. When you receive a pingback, a snippet of their post will automatically display as a comment on yours, along with a (nofollow) link to their post. Displaying pingbacks in this way encourages more bloggers to link to your posts, and it also increases the perception of buzz around your content (readers will see that bloggers are linking to you). A trackback is much the same as a pingback, except that it takes a bit more work for the other blogger to do. As a result, they’re not as popular.

You should also allow WordPress to notify other blogs when you link to them with a Pingback or Trackback.

To support pingbacks & trackbacks:

- In WordPress, go to Settings > Discussion

- Tick both ‘Attempt to notify any blogs…’ and ‘Allow link notifications…’

Support pingbacks and trackbacks

For more information…

- on writing helpful blog posts, see ‘Writing useful, unique blog posts’, or buy Darren Rowse’s excellent ebook, ‘31 Days to Build a Better Blog’.

- on social sharing services and social media optimization, see ‘Generate ‘buzz’ about your content with Social Media’.

ADD YOUR BLOG TO GOOGLE WEBMASTER TOOLS

If you’ve already added your overall site to Google Webmaster Tools, you can ignore this step. If you haven’t (or if your blog is your entire site), you should add it now, by following Google’s instructions (for WordPress blogs).

AVOID FLASH

Google CAN read Flash (SWF files). A bit. But you should still be very wary of Flash if you want a high ranking. Below is a quick explanation of why.

- Google can’t read all types of JavaScript – So if your Flash file is invoked with JavaScript, it may not be read.

- Links in Flash may not be ‘follow-able’ – Tim Nash conducted 30 tests over 4 domains to see what information the search engines saw from a Flash file. His results suggest that links in Flash are stripped of anchor text and appear not to be followed.

- Other search engines can’t read Flash at all – Google isn’t the only search engine out there. It may be the biggest, but the others are still important. Yahoo has the ability to read Flash, but at the time of writing, it appears they still don’t. None of the others do.

- Many mobile phones don’t handle Flash well – Over the last year – thanks mostly to the release of the iPhone – mobile search has become very popular. But it’s still very much in its infancy, and many mobile phones don’t yet handle Flash properly.

- More technical reasons to avoid Flash – If you’re still not convinced, read Rand Fishkin’s Moz blog post, Flash and SEO – Compelling Reasons Why Search Engines & Flash Still Don’t Mix. Or read Vanessa Fox’s (ex-Google Webmaster blogger) blog post Search-Friendly Flash?

BUT IF YOU INSIST ON USING FLASH…

If you really, really, REALLY want to use Flash, despite all of the potential problems above, then at least make sure you make available an underlying text version of its content, complete with keyword-rich links. Just to be sure. And if it’s a whole page or a whole site, make sure you deliver it at the same URL, so there are no duplicate content issues.

For some more technical advice on optimizing Flash for search, read Jonathan Hochman’s article, How to SEO Flash, first.

BE CAREFUL WITH AJAX

AJAX enhanced sites can deliver a rich visitor experience, but they can also be very difficult for search engine bots to crawl. Following is a checklist to help you develop AJAX pages that are visitor AND search engine friendly.

- Develop your AJAX pages using ‘Progressive Enhancement’. In other words, create your structure and navigation in HTML, then add all the pretty stuff on top with AJAX (including JavaScript versions of your static HTML links – aka HIJAX). That way, the search engines will be able to see all the things that are important to them.

- Ensure your static links don’t contain a #, as search engines typically won’t read past it.
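To see why a # in a link is risky, here’s a small Python sketch of what’s left of a URL once everything from the # onward is ignored. It uses urldefrag from the standard library; the URLs are hypothetical, and real crawlers may handle fragments differently.

```python
from urllib.parse import urldefrag


def url_without_fragment(url: str) -> str:
    """Return url with the # and everything after it removed."""
    return urldefrag(url).url


page_two = url_without_fragment("http://www.yourdomain.com/products#page=2")
page_three = url_without_fragment("http://www.yourdomain.com/products#page=3")
```

Both results are http://www.yourdomain.com/products, so two links you think of as different pages look like one and the same URL to a bot that stops at the #.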

For more information on the technical ins and outs of using AJAX, see AJAX-enhanced sites, AJAX and Non-JavaScript Experiences for SEO friendly websites, Hijax and Progressive enhancement with Ajax.

AVOID JAVASCRIPT

Google can now read JavaScript to discover links within. But there’s no consensus about how much PageRank those links pass on to the pages they point to. The most credible comment I’ve read on this was by Rand Fishkin, back in 2007:

“…although JavaScript links are sometimes followed, they appear to provide only a fraction of the link weight that normal links grant.”

My advice is to steer clear of JavaScript for content and links, at least until there is general consensus in the SEO community that those links are treated exactly as standard HTML links are treated.

If you want navigation menus that drop down on mouse rollover, use standard rollovers and/or CSS formatting instead of JavaScript. Your developer will know what this means.

DON’T USE TEXT WITHIN IMAGES

Search engines can’t read words that are part of an image. If you have text in an image, and you can’t select it with your mouse, chances are Google won’t be able to read it. And if it can’t read it, it won’t know which searches to rank you in.

So don’t have your graphic designer lay out a beautiful page of copy and save it as a .gif or .jpg file, then upload it to your site. For all its beauty, it’ll be completely wasted on the search engines.

Your best bet is to present all important text as straight HTML text. You can get fancy with sIFR text replacement if you want, but that starts getting fairly complicated, so you’d want to have a pretty good reason.

DON’T RELY TOO HEAVILY ON FOOTER LINKS FOR NAVIGATION

Most visitors don’t pay too much attention to footer links. Not surprisingly, the search engines are following suit.

What’s more, Google has filed a patent application for “Systems and methods for analyzing boilerplate…” Although it may not actually use that technology to discount the impact of footer links, it’s certainly not out of the question. Remember Google tends to ignore the things visitors ignore, and to place great emphasis on the things they value.

I’m not saying don’t put any links down there; I’m saying don’t use them as your main form of navigation.

DON’T USE EMPTY HYPERLINKS WITH DEFERRED HYPERLINK BEHAVIOR

Make sure the targets of all your hyperlinks are real. Don’t use empty hyperlinks that have some sort of deferred behavior.

DON’T USE SILVERLIGHT

Just don’t do it. Perhaps things will change, but for now, if you want your pages to rank, steer clear of Silverlight. Straight from the horse’s (Google’s) mouth:

“…we still have problems accessing the content of other rich media formats such as Silverlight…In other words, even if we can crawl your content and it is in our index, it might be missing some text, content, or links.”

Simple.

DON’T USE FRAMES OR IFRAMES

Pages that use frames aren’t really single pages at all. Each frame on the page actually displays the content from an entirely different page. The frames and their content are all blended and arranged on the page you see according to the instructions found on another page entirely, called the ‘frameset’ page.

The problem with this is that search engine bots only see the ‘frameset’ page. They don’t see the page you see at all, nor the individual pages that make up the page you see. And this is where the problem lies. Those individual pages may have lots of really helpful, keyword rich content and links, and the search engines don’t see it.

Although you can use the “NoFrames” tag to provide alternate content that the search engines can read, you’ll still be undermining your SEO because the links and content within a frame aren’t considered part of the page they display on. This means there’s no alignment between backlinks pointing to that page and the content that page displays. In other words those backlinks won’t seem as relevant to the bots. Likewise, the links on the display page won’t pass on any PageRank to the pages they point to, because they actually exist on a page that doesn’t have a public URL, and which therefore doesn’t attract any backlinks.

DON’T USE SPAMMING TECHNIQUES

Before discussing what sorts of spam you should make sure you’re not engaging in, let me first say this: it’s almost impossible to spam unintentionally. Search engine spamming usually involves quite a bit of work and knowledge.

But just to be sure, here’s a quick look at what you shouldn’t be doing.

WHAT IS SEARCH ENGINE SPAM?

A website is considered search engine spam if it violates a specific set of rules in an attempt to seem like a better or more relevant website. In other words, if it tries to trick the search engines into thinking that it’s something it’s not.

ON-PAGE SPAM

On-page spam is deceptive stuff that appears on your website. According to Aaron D’Souza of Google, speaking at the October 2008 SMX East Search Marketing Conference, in New York City, the following are considered on-page spam:

- Cloaking – Showing one thing to search engines and something completely different to visitors.

- JavaScript redirects – Because search engines don’t usually execute complex JavaScript, some spammers will create a page that looks innocent and genuine to search engines, but when a visitor arrives, they’re automatically redirected to a page selling Viagra or Cialis, etc.

- Hidden content – Some webmasters just repeat their keywords again and again and again, on every page, then hide it from visitors. These keywords aren’t in sentences, they’re just words, and they provide no value. That’s why they’re hidden, and that’s why it’s considered spam. The intent is to trick the search engines into thinking that the site contains lots of keyword rich, helpful content, when, in fact, the keyword rich content is just keywords; nothing more. These spammers hide their keywords by using very, very, very small writing (1pt font), or by using a font color that’s the same as the background color.

- Keyword stuffing – Severely overdoing your keyword frequency. Just try to ensure you use your target keyword phrase more often than any other single word or phrase. If it feels like you’re using it too often, you probably are. If it feels contrived to you, it will to readers too. (See ‘How many times should I use a keyword?’ for more information on keyword frequency.)

- Doorway pages – Page after page of almost identical content, intended simply to provide lots and lots of keyword-rich content and links, without providing any genuine value to readers.

- Scraping – Spammers who are too lazy to create their own content, or incapable of it, will steal it from other sites, blogs, articles and forums, then re-use it on their own site without permission, and without attributing it to its original author. The intent is to create lots of keyword rich content on their website, and trick the search engines into thinking their site is valuable, without actually doing any of the work themselves.

OFF-PAGE (LINK) SPAM

According to Google, the following link schemes are considered spam:

- Links intended to manipulate PageRank*

- Links to web spammers or bad neighborhoods on the web

- Excessive reciprocal links or excessive link exchanging

- Buying or selling links that pass PageRank

According to Sean Suchter of Yahoo (now with Microsoft), the search engines are always on the lookout for websites that:

“…get a LOT of really bad links, really fast.” (Speaking at the 2008 SMX East search marketing conference.)

They also look out for links out to bad sites.

But if “Links intended to manipulate PageRank” are spam, then every webmaster who follows Google’s own advice for improving the ranking of their website is spamming:

“In general, webmasters can improve the rank of their sites by increasing the number of high quality sites that link to their pages.”

Clearly, in point one above, Google is referring to people who are out-and-out spamming: creating undeserved links that offer absolutely no value to visitors.

That concludes what we have to say if you want to develop your website right. I’m sure you’ll have more to add. Feel free to leave your comment and let’s talk.